A customer of mine had problems with a vCenter (vCSA 6.5) that no longer wanted to boot. The first thing I checked was whether I could log in to the vCenter Appliance Management Interface (VAMI). Unfortunately, both the Web Client and the VAMI were unresponsive, and so was SSH.

So the only thing left to do was to check the VM's log files and open the console.

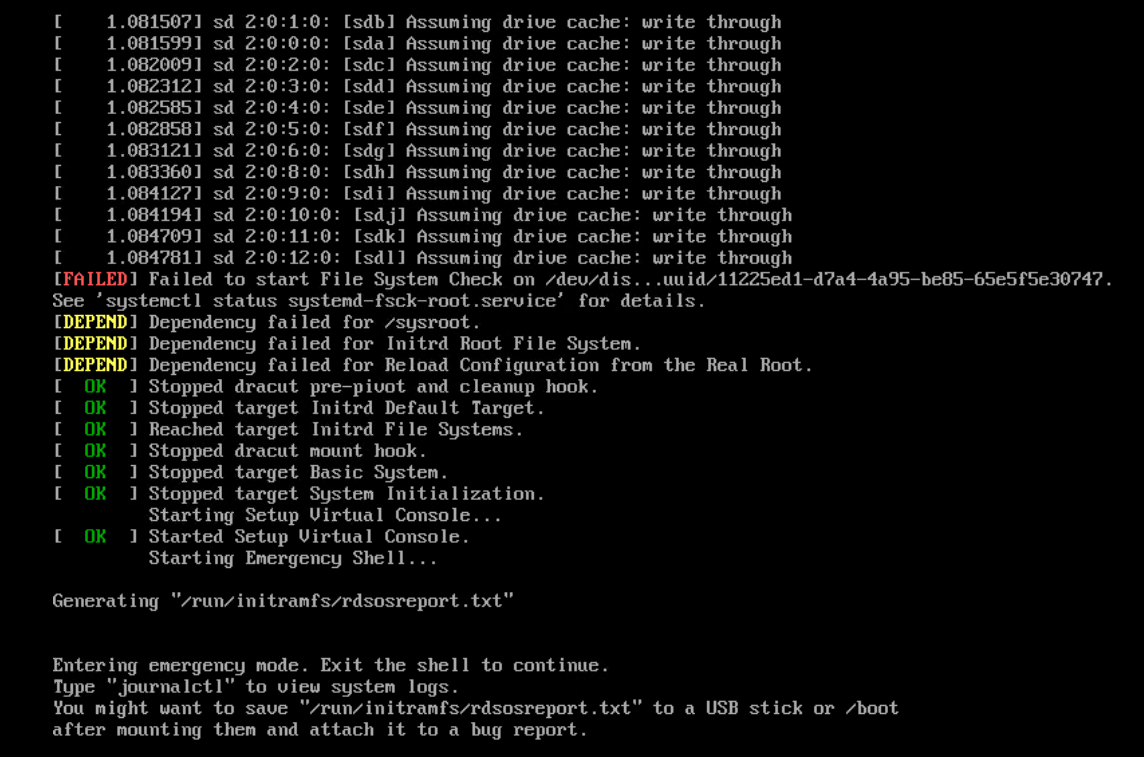

Once I was logged into the console, I saw this.

At first glance, the problems and errors the vCenter showed seemed to come from a network or storage outage, since quite a few messages indicated data corruption. The biggest hint was:

“Failed to start File System Check on /dev/dis..”

So I started with a filesystem check. At first the shell hardly responded, so I had to reboot a few times before I was actually able to send the command.

I ran the filesystem check with the command `fsck /dev/sda3`. The other sda partitions were fine.

After the check, and after typing yes many times (to approve each repair), I could finally work a little more. Unfortunately, there were still so many problems that even basic commands didn't work. The VM didn't even accept a simple reboot command, so I had to use the reset button to reboot. After the reboot I got the next screen.

After the first filesystem check, and after ignoring some errors, I ended up in emergency mode. Once I logged in with the root credentials of the vCSA, I could finally manage it a bit more. If you look closely, you can see that one of the three failures reads:

“Failed to start file system check on /dev/log_vg/log “.

Before I solve that, let me quickly give you some background information.

In short, from version 6.0 onwards the vCSA uses LVM (Logical Volume Manager). This is very useful for extending disks or moving data, as it is a lot more flexible and can usually be done while the VM is running; before, resizing partitions with a tool like gparted was much harder while the storage was in use. LVM consists of three important components. Physical Volumes (PVs) are the real storage space, simply put the HDDs/SSDs; in a VM this is the VMDK. Logical Volumes (LVs) are very similar to partitions in Windows, but they can be spread over multiple Physical Volumes. Finally, the Volume Group (VG) is the collective group that pools the PVs and from which the LVs are carved. For a standard system one VG is usually sufficient, but VMware's vCSA uses a one-to-one relationship: every Logical Volume has its own Volume Group.
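To see this one-to-one layout on an appliance yourself, `lvs` can print one "VG LV" pair per line. The following is a minimal sketch, not a VMware tool: the helper names `count_lvs_per_vg` and `all_one_to_one` are hypothetical, and the code assumes the whitespace-separated output of `lvs --noheadings -o vg_name,lv_name`.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helpers): count how many logical volumes each
# volume group contains. Input is "VG LV" pairs on stdin, e.g. from:
#   lvs --noheadings -o vg_name,lv_name
count_lvs_per_vg() {
  # Skip blank lines, count one LV per line for its VG, print "VG count".
  awk 'NF >= 2 { count[$1]++ } END { for (vg in count) print vg, count[vg] }' | sort
}

# Exit status 0 if every VG holds exactly one LV, 1 otherwise.
all_one_to_one() {
  count_lvs_per_vg | awk '$2 != 1 { bad = 1 } END { exit bad }'
}
```

Usage on the appliance would be something like `lvs --noheadings -o vg_name,lv_name | all_one_to_one && echo "one LV per VG"`. Reading from stdin keeps the logic separate from the live LVM tools, so you can try it on saved output first.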

So, back to the error. What we see is that the logical volume log (the partition where VMware stores its log files) fails to start its file system check. This indicates that there is still some corruption in it. The logical volume log is located in the volume group log_vg.

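The device-mapper entries for these logical volumes are kept in /dev/mapper.

Each volume group also gets its own directory under /dev with one entry per logical volume, which is why the error refers to /dev/log_vg/log. In /dev/mapper there are no subfolders: every logical volume is a single entry that combines the volume group and logical volume names. Our problem volume is therefore /dev/mapper/log_vg-log.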

We can check and repair this volume with the command:

`fsck /dev/mapper/log_vg-log`

*Note: See how the slash (/) turns into a dash (-) between the VG and LV names.

(/dev/log_vg/log becomes /dev/mapper/log_vg-log)
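The renaming rule can be written as a small shell function. This is a minimal sketch with a hypothetical helper name, `to_mapper_path`, and it assumes a plain /dev/<vg>/<lv> path. One detail goes beyond the note above: device-mapper doubles any dash that already appears inside a VG or LV name, so the single dash between them stays unambiguous.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helper): translate an LVM path like /dev/log_vg/log
# into its /dev/mapper name. Dashes inside the VG or LV name are doubled,
# then VG and LV are joined with a single dash.
to_mapper_path() {
  local lvm_path="$1"
  local rest="${lvm_path#/dev/}"   # log_vg/log
  local vg="${rest%%/*}"           # log_vg
  local lv="${rest#*/}"            # log
  vg="${vg//-/--}"                 # escape dashes inside the VG name
  lv="${lv//-/--}"                 # escape dashes inside the LV name
  printf '/dev/mapper/%s-%s\n' "$vg" "$lv"
}

to_mapper_path /dev/log_vg/log   # prints /dev/mapper/log_vg-log
```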

After this last check, everything was repaired, and a simple reboot command worked again. After that, the VM came up without any problems.

So in the end, the problem was a corrupt filesystem, which could be solved with a simple file system check.

If you want to check other logical volumes for inconsistencies or errors next time, I suggest using the following commands to list them.

`pvdisplay -m`

Shows the mapping between the physical volumes and the VGs and LVs that use them.

`vgdisplay`

Shows information about the volume groups (VGs). As mentioned before, by default each VG has a one-to-one relationship with its LV. This is specific to VMware's vCSA.

`lvdisplay`

Shows information about the logical volumes (LVs).
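If you want to go through all logical volumes after listing them, a dry run that only prints the matching fsck commands is a safer starting point than running fsck blindly. The sketch below assumes input in the format of `lvs --noheadings -o vg_name,lv_name`; the function name `print_fsck_cmds` is hypothetical. It does not run fsck itself. Make sure a volume is not mounted (for example by working from emergency mode, as above) before running a printed command, because repairing a mounted filesystem can cause further damage.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helper): read "VG LV" pairs on stdin, e.g. from
#   lvs --noheadings -o vg_name,lv_name
# and PRINT (not run) one fsck command per logical volume, using the
# /dev/mapper name with dashes inside VG/LV names doubled.
print_fsck_cmds() {
  local vg lv
  while read -r vg lv; do
    # Skip blank or incomplete lines.
    [ -n "$vg" ] && [ -n "$lv" ] || continue
    printf 'fsck /dev/mapper/%s-%s\n' "${vg//-/--}" "${lv//-/--}"
  done
}
```

Keeping it as a printer means you review each command first and run only the ones for unmounted volumes.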

If you want to know more about this topic, check out this awesome post by Cormac Hogan, who also made me realize that the vCSA has this one-to-one relationship between the volume group and the logical volume.

I hope this helps you a bit with troubleshooting, and that you now know how to handle this in the future.

Samir

Samir is the author of vSAM.Pro and a life enthusiast who works as an IT consultant. With a great passion for tech and personal development, he loves to help people with their problems, but also to inspire them with a positive outlook on life.

Besides that, he is also a big sport and music junkie who loves to spend a big chunk of his time producing music or physically stretching himself.

Thanks a lot, Samir. This was exactly my issue. After fsck on /dev/mapper/vlog_vg-log, the system came back up.

Happy to hear that the article helped :).

Thanks for letting me know and have a great day

Thank you for breaking down VMware's unique implementation of LVM with regard to PV/VG/LV. It helped solve the failure to boot, and it was actually the log volume as well.

No problem. For some reason I never saw this comment, so sorry for the late response. Happy to help!